The results of the 2016 Presidential election surprised many people and led them to question how accurate polls really are. There are endless think pieces about how the polls were wrong and how people were lulled into a false sense of certainty, but what seems to get overlooked in all of these conversations is the probabilistic nature of polling. That's what I want to delve into a little deeper and understand better.

Before going too far, it should be said that conducting an unbiased poll is an incredibly hard thing to do. You need to sample a representative cross section of the electorate, and that's getting harder as technology fragments the ways people can be contacted. There are many other reasons polls can give unexpected results, but what I'm really interested in is what the underlying mathematics tells us about the results of a poll when all these other effects are stripped away.

From this point on we're going to look at a simplistic poll where we ask people if they prefer Apples or Bananas, and we'll treat a response for Apples as a positive response. We also need to ask: what are we trying to discover with this poll? The answer is that we are trying to determine the proportion of the public that prefer Apples to Bananas. We don't know what that number is. It's a hidden parameter of the population that we are trying to discover through random sampling.

Let's define some variables, do some math and take a poll. It won't be anything too formal. I'm not labelling my graphs; this is just a casual chat between friends.

p is the probability of a positive response for an Apple

n is the number of people surveyed for the poll

k is the number of positive responses for an Apple

P(k;p) is the probability of k positive responses given that the probability of a single positive response is p

$$P(k;p) = \binom{n}{k} p^{k} (1-p)^{n-k}$$

*Binomial pmf*

$$k \in \{0, 1, \dots, n\}, \qquad 0 \le p \le 1$$

*Binomial pmf range*

The probability mass function for a series of Bernoulli trials is the binomial distribution. It tells us the probability of a particular result when we already know the underlying probability parameter, p. However, we don't know p, so it's more useful to turn the same equation around and treat the results of the trials as fixed: given the number of positive results we observed, how likely is each possible value of p? Read this way, as a function of p with k held fixed, the equation L(p;k) is called the likelihood function. It doesn't follow the same rules as a probability density function (it need not integrate to 1 over p), but it does let us compare the relative likelihoods of different values of p.
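To make the "same formula, read two ways" idea concrete, here's a minimal Python sketch (my own illustration, not from the original analysis). The helper names `binomial_pmf` and `likelihood` are hypothetical. For a fixed p, the pmf summed over every possible k comes to 1. For a fixed k, the likelihood integrated over p does not. For the binomial it integrates to 1/(n+1).

```python
from math import comb

def binomial_pmf(k: int, n: int, p: float) -> float:
    """P(k; p): probability of exactly k positive responses out of n."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

def likelihood(p: float, k: int, n: int) -> float:
    """L(p; k): the same formula, read as a function of p with k fixed."""
    return binomial_pmf(k, n, p)

n, k = 100, 35

# For a fixed p, summing the pmf over every possible k (0..n) gives 1.
total_probability = sum(binomial_pmf(i, n, 0.35) for i in range(n + 1))

# For a fixed k, integrating the likelihood over p in [0, 1] does not give 1.
# A midpoint-rule integral over 10,000 slices approximates the area.
steps = 10_000
area = sum(likelihood((j + 0.5) / steps, k, n) for j in range(steps)) / steps

print(f"sum of pmf over k:       {total_probability:.6f}")
print(f"integral of L(p) over p: {area:.6f}  (1/(n+1) = {1 / (n + 1):.6f})")
```

This is why the likelihood is only useful for comparing values of p against each other, not as a probability in its own right.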

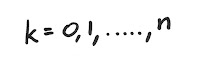

$$L(p;k) = \binom{n}{k} p^{k} (1-p)^{n-k}$$

*Likelihood Function*

For example: 100 people are polled and 35 people say that they prefer apples to bananas. This gives a likelihood function of:

$$L(p;35) = \binom{100}{35} p^{35} (1-p)^{65}$$

*A poll of 100 people with 35 positive responses*

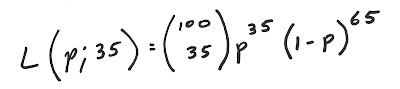

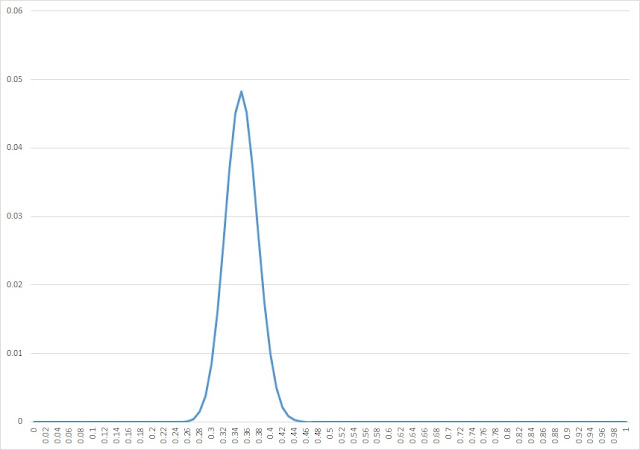

When the above likelihood function is graphed we get the following.

*Figure: likelihood of p for a poll of 100 people with 35 positive responses*

From this we can see that p = 0.35 is nearly twice as likely as p = 0.3, but 0.3 still isn't a remote possibility. Visually, p could be anywhere between about 0.27 and 0.43 and you wouldn't be surprised. What if we conduct more polling? Let's say we poll 300 people.
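To put numbers on the eyeballing, here's a small Python sketch (my own, not part of the original post) that evaluates the likelihood directly. `likelihood` and `relative_likelihood` are hypothetical helper names. The relative likelihood divides by the peak value at p = k/n, so the top of the curve is 1.

```python
from math import comb

def likelihood(p, k, n):
    """L(p; k) for k positive responses out of n."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

def relative_likelihood(p, k, n):
    """L(p) scaled so that the peak, at p = k / n, equals 1."""
    return likelihood(p, k, n) / likelihood(k / n, k, n)

k, n = 35, 100
ratio = likelihood(0.35, k, n) / likelihood(0.30, k, n)
print(f"L(0.35) / L(0.30) = {ratio:.2f}")  # roughly 1.8

# Compare the edges of the "wouldn't be surprised" range with the peak.
for p in (0.27, 0.30, 0.43):
    print(f"relative likelihood at p = {p:.2f}: {relative_likelihood(p, k, n):.2f}")
```

Both ends of the 0.27 to 0.43 range sit at roughly a fifth to a quarter of the peak height. That is low, but not negligible.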

|

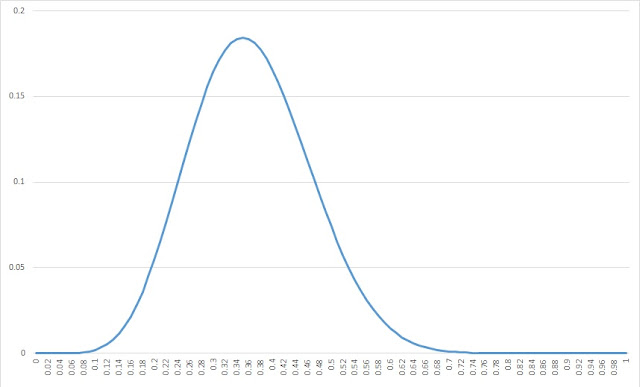

| A poll of 300 people with 105 positive responses |

You can see that the peak of likely values has tightened, and we can more confidently say that p lies between about 0.3 and 0.4. This shows how additional polling reduces the margin of error. If you're astute you may have noticed that the results of the polls don't depend on the size of the population being polled. As long as you select a representative cross section of society, and the population is much larger than the sample, the size of the population is irrelevant. To see what happens when only a relatively small number of people are polled, consider a poll of 20 people.
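The claim that population size doesn't matter can be checked with a quick simulation. This sketch rests on my own assumptions: a population in which exactly 35% prefer Apples, polls of 300 people drawn without replacement, and a hypothetical helper `poll_spread`. It measures how much the poll estimates vary for wildly different population sizes. The spread only shrinks noticeably once the sample is a sizeable fraction of the population. That effect is described by the finite population correction factor sqrt((N - n) / (N - 1)).

```python
import random
import statistics

def poll_spread(population_size, true_p, sample_size, trials, rng):
    """Standard deviation of the sample proportion across repeated polls.

    People are numbered 0..population_size - 1, and the first
    round(true_p * population_size) of them are assumed to prefer Apples.
    Each poll samples people without replacement.
    """
    apple_lovers = round(true_p * population_size)
    estimates = []
    for _ in range(trials):
        sample = rng.sample(range(population_size), sample_size)
        positives = sum(1 for person in sample if person < apple_lovers)
        estimates.append(positives / sample_size)
    return statistics.stdev(estimates)

rng = random.Random(2016)  # fixed seed so the run is repeatable
for population in (10_000, 1_000_000, 100_000_000):
    spread = poll_spread(population, 0.35, 300, 1_000, rng)
    print(f"population {population:>11,}: spread of estimates ~ {spread:.4f}")
```

All three spreads come out close to sqrt(0.35 × 0.65 / 300), which is about 0.028, whether the population is ten thousand or a hundred million.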

*Figure: likelihood of p for a poll of 20 people with 7 positive responses*

It wouldn't really surprise you if p were anywhere between 0.18 and 0.56, which is a pretty wide range and doesn't reveal much about the actual value of p. It's also worth noting that all of the examples above have a 35% positive response rate, so the likelihood peaks at p = 0.35 in every case. Polling more people only narrows the peak around that value. In reality the peak would move around a little with each new batch of data. However, the more people you poll, the more confident you can be that roughly one in three people prefer apples to bananas.
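The three polls can also be compared numerically. The sketch below is my own and is not the original post's method. It scans p on a grid and keeps every value whose relative likelihood is at least exp(-1.92), about 0.15. That is a common cut-off which roughly corresponds to a 95% confidence interval. The cut-off, the grid step and the function names are all assumptions. A different cut-off gives wider or narrower ranges than the eyeballed ones in the text. Working with logs means the binomial coefficient cancels out and huge numbers never appear.

```python
from math import exp, log

def log_relative_likelihood(p, k, n):
    """log of L(p) / L(k / n); the binomial coefficient cancels out."""
    p_hat = k / n
    return (k * (log(p) - log(p_hat))
            + (n - k) * (log(1 - p) - log(1 - p_hat)))

def likelihood_interval(k, n, cutoff=exp(-1.92), step=0.001):
    """Smallest and largest p on the grid with relative likelihood >= cutoff.

    Assumes 0 < k < n so that p_hat is strictly between 0 and 1.
    """
    grid = [i * step for i in range(1, round(1 / step))]
    inside = [p for p in grid if log_relative_likelihood(p, k, n) >= log(cutoff)]
    return min(inside), max(inside)

for n, k in ((20, 7), (100, 35), (300, 105)):
    low, high = likelihood_interval(k, n)
    print(f"n = {n:3d}, k = {k:3d}: p between about {low:.3f} and {high:.3f}")
```

Every range is centred near 0.35, and each one gets narrower as n grows.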

There is a rule of thumb in polling that the percentage margin of error is 100 divided by the square root of the number of respondents. So for our three examples above, the margins of error for 20, 100 and 300 people are 22%, 10% and 6% respectively. It's not uncommon to see a political poll of 2000 respondents, and by the same rule of thumb it would still have a margin of error of 2.2%.
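Where does the rule of thumb come from? The usual 95% margin of error for a proportion is 1.96 × sqrt(p(1 - p)/n). It is largest at p = 0.5, where it works out to 0.98/sqrt(n), which is close enough to 1/sqrt(n) to round. The sketch below compares the two. The function names are hypothetical, and the formula is the standard normal approximation rather than anything taken from the post.

```python
from math import sqrt

def rule_of_thumb_moe(n):
    """The rule of thumb from the text: 100 / sqrt(n), in percent."""
    return 100 / sqrt(n)

def normal_approx_moe(n, p=0.5, z=1.96):
    """95% margin of error for a proportion, in percent: z * sqrt(p(1-p)/n)."""
    return 100 * z * sqrt(p * (1 - p) / n)

for n in (20, 100, 300, 2000):
    print(f"n = {n:4d}: rule of thumb {rule_of_thumb_moe(n):4.1f}%, "
          f"95% at p = 0.5 {normal_approx_moe(n):4.1f}%, "
          f"95% at p = 0.35 {normal_approx_moe(n, p=0.35):4.1f}%")
```

At p = 0.35 the normal-approximation margin is slightly smaller than the rule of thumb, so the rule errs on the cautious side.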

I suppose what I'm trying to get at is that even if you could remove all of the external human factors affecting the poll and you chose a perfectly random cross section of the population, your sample size will limit how accurately your poll determines the underlying parameters of the population.
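To see this limit in action, here's a simulation sketch. It is my own illustration and assumes a true p of 0.35, which in a real poll would be unknown. It also uses a hypothetical helper `simulate_polls`. The code runs thousands of perfectly random polls of 300 people. There is no bias anywhere in it, yet individual polls still scatter around the true value. Most of them, but not all, land within the rule-of-thumb margin.

```python
import random
from math import sqrt

def simulate_polls(true_p, n, trials, rng):
    """Run `trials` perfectly random polls of n people.

    Each respondent independently prefers Apples with probability true_p.
    Returns each poll's estimated proportion of Apple fans.
    """
    return [sum(rng.random() < true_p for _ in range(n)) / n
            for _ in range(trials)]

rng = random.Random(538)      # fixed seed for a repeatable run
true_p, n = 0.35, 300         # assumed "hidden" population parameter
estimates = simulate_polls(true_p, n, 5_000, rng)

margin = 1 / sqrt(n)          # the 100/sqrt(n) rule of thumb, as a fraction
within = sum(abs(e - true_p) <= margin for e in estimates) / len(estimates)
worst = max(abs(e - true_p) for e in estimates)

print(f"lowest estimate {min(estimates):.3f}, highest {max(estimates):.3f}")
print(f"{within:.1%} of polls landed within ±{margin:.1%} of the true value")
print(f"the worst poll was off by {worst:.1%}")
```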

For all the talk about how every polling model got the result of the Presidential election wrong, remember that the 538 model gave this outcome roughly a one in three chance of occurring. That isn't an insignificant probability. It's true that polling methods need improvement, but in this case I think the main problem is that the way probabilities are communicated to the general public is too confusing. People don't intuitively understand what percentage probabilities mean. The IPCC has the same problem and has even defined terms in everyday language to communicate probabilities to the public.

*Figure: IPCC likelihood scale*

I actually have some modelling problems in mind that I would like to work on one day, and until now I had always envisaged communicating them as percentages, but given the intended audience I think I might change my approach.
