In my ongoing efforts to back up my files, I have a directory structure that I want to archive, but only if it has changed. The reason for this is that the back ups are compressed and every time they get changed the whole archive needs to be re-uploaded. Unfortunately the internet in Australia makes this a daunting task. So I need a way to know if a directory has changed. There are a couple ways I could do this, I could use time stamps to see if files or directories have been modified, or I could look at file contents. I decided to look at the file contents, and basically create a "hash" for files and directories. This would allow me to compare values over time.

Most people reading this would be familiar with taking the MD5 hash of a file and what that means. It gives you a fingerprint of the file contents, and if any of the contents change, the hash value changes dramatically. That's great, but it's only for files and it completely ignores the file name. To me, a directory structure has changed if even a file name has changed. I explored using something like an archiving format like tar to bundle up the directory structure with file contents and then taking a hash, but there's no guarantee that one implementation of tar will give exactly the same results as another, i.e. it's not deterministic. This would give different hash values and is useless.

To overcome these problems I came up with something that I think is reasonably simple that only takes into account changes in directory structure, file and directory names, and file contents when determining if something has changed.

- A directory structure can have files and sub-directories.

- A file hash is equal to MD5(MD5(file contents) XOR MD5(UTF-8 byte array of name))

- A directory hash is equal to MD5((directory contents) XOR MD5(UTF-8 byte array of name))

- The content of a directory is equal to the XOR of the hashes of all files and directories it contains in the level below it.

Let me just state now that I know MD5 isn't secure, this isn't a security thing, I just need a fast way to get a file checksum.

So with these basic rules I wrote a PowerShell script so that we can take the hash of files and directories to a create a fingerprint so that they can be compared to future versions. In the test below I created a directory structure with some test files to play around with. Some directories are junction points and symlinks. Some files are hardlinks and symlinks. The script can be configured to ignore junction points and symlinks, not hardlinks as these are indistinguishable from other files. I also threw in some unicode file and directory just to make sure every thing works as expected.

In the image below, each item in the directory structure has its own box with 4 different hexadecimal strings. The red string describes the content hash. If it's a file, that's just the normal MD5 hash of the file, if it's a directory it's the combined XOR of the all files and directories in the level below it. The green string is the MD5 hash of the byte array of the name of the file or directory. The blue string is the XOR of the content hash and the name hash. The black string with green highlighting is the MD5 hash of the XOR result. (I'll get back to why this is done later)

|

| Calculating a directory hash |

The implementation isn't too hard. First create a function that calculates our version of a file hash. Then create a function that can create a directory hash that calculates the hash of all items in it, along with the other operations needed to create the directory hash. By recursion this will then explore the directory tree.

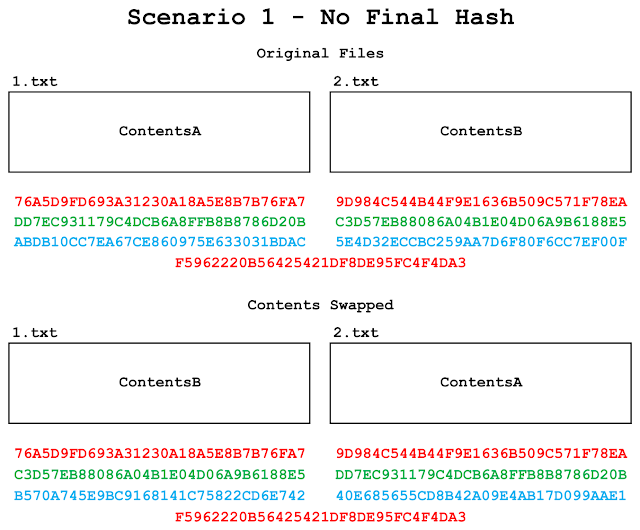

It may seem excessive to perform a hash on the result of the XOR value, but in the scenario below I'll show how you can get the same hash for two different directory contents if you don't do it.

|

| No final hash can lead to different directories with equal hashes |

You can see in the image above that if you swap the content of two files and don't do a final hash you can end up in a situation where they can give equal content hashes and if they happen to be in directories with the same name, those directories will have the same hash value. Hashing the values of the XOR prevents this as can be seen below.

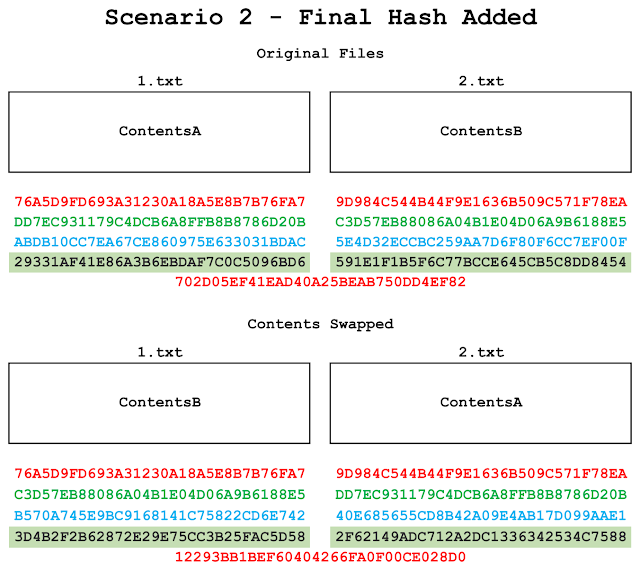

|

| Adding a final hash leads to directories with different hashes |

You can now differentiate between the directories as they have different hashes. The final MD5 operation basically "scrambles" the information of the content and name hash before it can propagate to the level above. Without it, and because of the associative and commutative properties of the XOR function you can end up with equal XOR results.

|

| Get the code! |

No comments:

Post a Comment

Note: Only a member of this blog may post a comment.